Why this matters for technical SEO practitioners right now

Most brands treating Perplexity as “just another search engine” are misreading the mechanism entirely. Perplexity does not rank pages. It selects sources. That distinction changes everything about how you optimise.

Query volume on Perplexity crossed 100 million monthly searches in late 2024. Its user base skews toward researchers, analysts, and early-adopter professionals. Brands Perplexity cites get surfaced to exactly the audience most likely to act on recommendations. Most SEO work in 2025 still treats traditional Google ranking signals as the primary lever. Wrong lever entirely.

What follows is a technical breakdown of how Perplexity selects cited sources, what patent-documented mechanisms govern LLM-based retrieval systems, and what concrete changes a technical SEO practitioner can make to increase citation probability.

How Perplexity actually selects sources

Perplexity operates as a retrieval-augmented generation (RAG) system. That is the foundational architecture distinction separating it from a standard search engine, and it is why Google ranking tactics transfer poorly here.

In a RAG system, the model retrieves candidate documents before generating a response. The generated answer is then grounded against those retrieved documents, with citations attributed to specific passages. The retrieval step uses a combination of web crawling, via PerplexityBot, and semantic vector search against an internal index.

The patent-documented mechanism most relevant here is Google’s US Patent 10,394,882, “Retrieval of Relevant Documents Based on Semantic Similarity,” filed July 18, 2016, by Quoc V. Le and Tomáš Mikolov. It predates Perplexity but describes the kind of vector-based retrieval that modern RAG implementations build on. Understanding it explains why Perplexity’s source selection behaves the way it does.

The patent describes a system where “documents are ranked by their semantic distance from a query vector.” Not keyword match. Semantic distance. A document using topically proximate language, co-occurring domain terms, and consistent entity references will be retrieved with higher probability than one matching exact keywords but lacking semantic coherence.
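To make "semantic distance" concrete, here is a minimal sketch of ranking documents against a query vector. It assumes cosine similarity as the distance measure and uses made-up three-dimensional vectors; real systems use learned embeddings with hundreds of dimensions, and nothing here reflects Perplexity's actual implementation.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (name, score) pairs, most similar document first."""
    scored = [(name, cosine_similarity(query_vec, vec))
              for name, vec in doc_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy vectors, not real embeddings.
query = [0.9, 0.1, 0.3]
docs = {
    "semantically_close_page": [0.8, 0.2, 0.4],
    "keyword_stuffed_page": [0.1, 0.9, 0.1],
}
ranking = rank_by_similarity(query, docs)
```

The page whose vector points in roughly the same direction as the query ranks first, regardless of whether it shares exact keywords with it.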

Applied to Perplexity: consistent publication of content with coherent entity relationships, domain-specific co-occurring phrases, and citable factual claims raises the probability of appearing in the candidate selection phase. Getting retrieved is step one. Being cited is step two. Most optimisation work focuses on neither.

The crawlability problem most brands miss

PerplexityBot crawls independently of Googlebot.

If your robots.txt allows Googlebot and blocks unrecognised user-agents, PerplexityBot is blocked. Most enterprise robots.txt configurations do exactly this. Pull up yourdomain.com/robots.txt now and check whether PerplexityBot is named in its own User-agent group with an Allow rule. It probably is not.

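The crawler identifies itself as PerplexityBot/1.0 in its user-agent string, and the token to match in robots.txt is PerplexityBot. You need an explicit User-agent: PerplexityBot group with an Allow directive if your configuration defaults to Disallow for unlisted bots. Five-minute fix. Potentially significant citation impact.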
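One way to run this check without guessing is Python's standard-library robots.txt parser. The sketch below compares a default-deny configuration with a corrected one; both robots.txt bodies and the example.com URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def can_bot_fetch(robots_txt, user_agent, url):
    """Parse robots.txt text and report whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical default-deny configuration: only Googlebot is let through.
BLOCKING = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

# Same file with an explicit group for PerplexityBot added.
FIXED = """\
User-agent: Googlebot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /
"""

url = "https://example.com/guide"
blocked_before = not can_bot_fetch(BLOCKING, "PerplexityBot", url)
allowed_after = can_bot_fetch(FIXED, "PerplexityBot", url)
```

To test a live site, fetch the robots.txt body yourself and pass it to `can_bot_fetch` in place of the sample strings.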

Perplexity’s crawler prioritises content freshness. Pages last crawled more than 90 days ago receive lower retrieval weight during time-sensitive queries. Publishing cadence matters here, not as a content-volume play, but as a crawl-recency signal.
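If you already record the last PerplexityBot visit per URL, the 90-day threshold above is easy to check. This is a small sketch; the URLs and dates are invented, and `stale_urls` is a hypothetical helper name.

```python
from datetime import date, timedelta


def stale_urls(last_crawled, today, max_age_days=90):
    """Return URLs whose last crawl is more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, seen in last_crawled.items() if seen < cutoff)


# Hypothetical last-crawl dates taken from server logs.
last_crawled = {
    "/guide": date(2025, 5, 20),
    "/old-report": date(2025, 1, 15),
}
stale = stale_urls(last_crawled, today=date(2025, 6, 1))
```

Pages on the stale list are candidates for a substantive update, not a cosmetic date change.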

What makes a page citable

Retrieval gets your content into the candidate set. Citation happens when the RAG system can extract a specific, attributable passage that directly answers the query.

Three structural properties determine whether a retrieved page gets cited.

Passage-level answerability. Perplexity’s citation mechanism operates at the passage level, not the page level. A 3,000-word article with a single citable fact buried in paragraph fourteen will be cited less reliably than a 600-word post where every paragraph contains a standalone claim. Structure your content so that any 150-word block can be extracted and read as a complete answer to a specific question.

Factual density with source attribution. RAG systems favour passages with verifiable claims: numbers, dates, named entities, defined relationships. Vague qualitative statements score poorly in passage retrieval. “Studies suggest that X improves Y” is retrievable. “Ahrefs’ 2024 study found that 76.4% of featured snippet URLs appear in position 1 to 10” is citable. The difference is specificity and attribution traceability.

Named entity consistency. If your brand appears as three different name forms across your own pages, a retrieval system has to treat those as ambiguous entity references. Pick one canonical name and use it consistently across every page, author bio, About section, and external profile. Consistency is how a RAG system learns to associate citations with your brand node.
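A quick way to audit name consistency is to count how often each variant of the brand name appears in your own page text. The sketch below assumes you have already extracted plain text from each page; the brand name and its variants are hypothetical.

```python
import re
from collections import Counter


def count_name_variants(pages, variants):
    """Count occurrences of each name variant, preferring the longest match."""
    # Longest first, so "Acme Analytics Sdn Bhd" is not also counted as "Acme Analytics".
    ordered = sorted(variants, key=len, reverse=True)
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(v) for v in ordered) + r")\b"
    )
    counts = Counter({v: 0 for v in variants})
    for text in pages:
        counts.update(pattern.findall(text))
    return counts


# Hypothetical page text and brand name forms.
pages = [
    "Acme Analytics Sdn Bhd was founded in 2019. Acme Analytics publishes reports.",
    "Contact AcmeAnalytics today.",
]
variants = ["Acme Analytics", "Acme Analytics Sdn Bhd", "AcmeAnalytics"]
counts = count_name_variants(pages, variants)
```

More than one variant with a non-zero count means the canonical form is not yet applied everywhere.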

The structural content changes that raise citation probability

These are specific changes tied to how passage retrieval works, not general content advice.

Use question-format heading structures. Perplexity frequently surfaces content in response to natural language questions. An H2 reading “How does retrieval-augmented generation select sources?” is more directly retrievable than “Our approach to RAG.” The heading functions as a semantic anchor for the passage beneath it.

Write short, citable paragraphs under question H2s. Three to four sentences per paragraph. The first sentence carries the core claim. The second and third support it. The fourth is optional. This is not a readability recommendation. It is a passage extraction constraint. RAG systems segment documents into retrievable chunks, and paragraph breaks are natural segmentation points.
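The paragraph constraint can be checked mechanically. This sketch splits text on blank lines and flags any paragraph over four sentences or 150 words, the limits suggested in this article. The sentence splitter is deliberately naive (it breaks on ., ! and ? followed by whitespace), so treat the counts as approximate.

```python
import re


def audit_paragraphs(text, max_sentences=4, max_words=150):
    """Report sentence and word counts per paragraph and flag long ones."""
    report = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, paragraph in enumerate(paragraphs):
        # Naive split: abbreviations such as "e.g." will be over-counted.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        words = paragraph.split()
        report.append({
            "index": index,
            "sentences": len(sentences),
            "words": len(words),
            "ok": len(sentences) <= max_sentences and len(words) <= max_words,
        })
    return report


# Hypothetical two-paragraph sample.
sample = "One. Two. Three.\n\nA. B. C. D. E."
report = audit_paragraphs(sample)
```

Paragraphs where `ok` is false are the ones to split or trim first.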

Add structured data. Specifically FAQPage and HowTo schema. These markup types signal passage-level answerability to any system reading your structured data. FAQ schema in particular mirrors the question-answer retrieval pattern underlying most Perplexity queries.
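As an illustration, FAQPage markup can be generated from a list of question-answer pairs. The sketch below builds the schema.org JSON-LD structure and wraps it in a script tag; the sample question and answer are placeholders, and the helper names are hypothetical.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def to_script_tag(data):
    """Serialise the object as a JSON-LD script element for the page head."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder content, to be replaced with the page's real Q&A.
faq = build_faq_jsonld([
    ("How does retrieval-augmented generation select sources?",
     "It retrieves candidate documents first, then grounds the answer in them."),
])
tag = to_script_tag(faq)
```

The questions in the markup should match the visible question-format H2s on the page, word for word.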

Publish primary research, even small-scale. Original data with a byline gets cited disproportionately. A survey of 50 Malaysian businesses about their AI search experience, published with methodology and findings, will be retrieved and cited far more than a synthesis of existing industry data. RAG systems, when surfacing multiple candidate passages on a topic, prioritise the one that cannot be found elsewhere. Uniqueness is a retrieval signal. I have seen this hold even for datasets most practitioners would consider too small to publish.

Claim your brand entity on external knowledge bases. Wikipedia is the obvious one. Wikidata is more important and more often ignored. Crunchbase, LinkedIn Company Page, and your Google Business Profile all contribute to entity disambiguation. Perplexity’s system needs to resolve your brand name to a stable entity node before it can reliably attribute citations to you. Without that entity grounding, citations may appear but won’t be associated with your brand in any trackable way.

The domain authority transfer problem

Perplexity’s source weighting does not fully correlate with Domain Rating.

High-DR sites get cited frequently. But they get cited because they tend to satisfy the conditions above: passage-level answerability, factual density, entity consistency. Not because the citation algorithm reads Ahrefs DR.

Perplexity’s own published guidance on PerplexityBot indicates the system favours sources with consistent citation patterns from other authoritative pages. Structurally similar to PageRank-style link equity, but different enough that chasing backlinks as a Perplexity optimisation strategy is misdirected. The backlinks worth acquiring are ones that signal topical authority within your specific domain, because those co-occur with exactly the entity and phrase relationships that improve semantic retrieval.

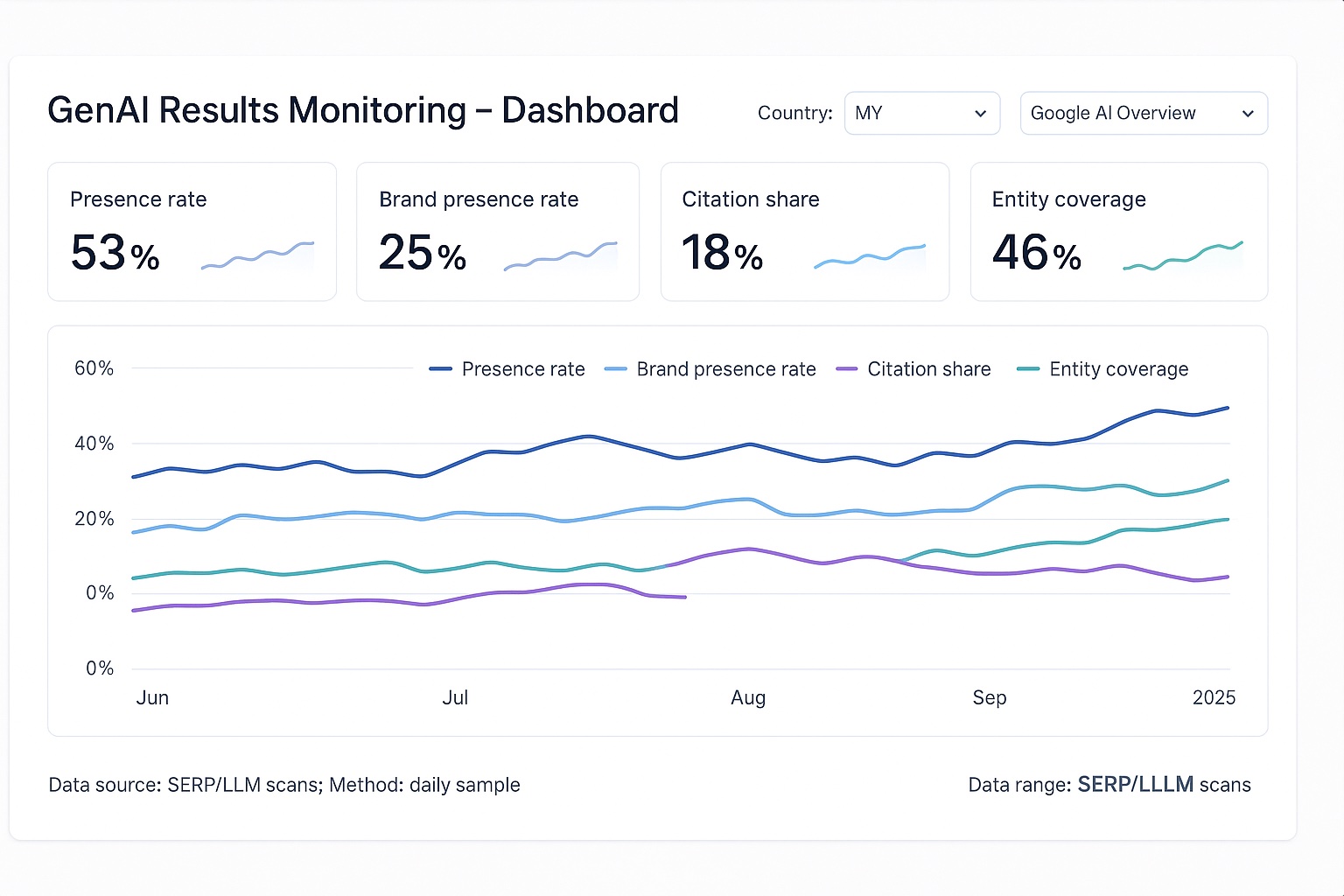

Monitoring whether it is working

Standard rank tracking tools cannot measure Perplexity citation performance. No impression data surfaces in Search Console for Perplexity traffic. Measurement requires two methods running together.

First, set up PerplexityBot in your server log analysis. Filter by user-agent string, create a segment for PerplexityBot/1.0, and monitor crawl frequency by URL. Pages crawled more frequently are being indexed more actively. Crawl frequency is a leading indicator of citation probability.
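A minimal version of this log segment can be built with a regular expression and a counter. The sketch assumes the common "combined" access log format used by Apache and Nginx; the sample lines, IP addresses, and shortened user-agent strings are invented for illustration.

```python
import re
from collections import Counter

# Combined log format: ... "METHOD /path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def perplexitybot_hits(log_lines):
    """Count PerplexityBot requests per URL path."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "PerplexityBot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


# Hypothetical log lines with simplified user-agent strings.
sample_log = [
    '203.0.113.5 - - [01/Mar/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.5 - - [08/Mar/2025:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [08/Mar/2025:11:00:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]
hits = perplexitybot_hits(sample_log)
```

User-agent strings can be spoofed, so treat these counts as directional unless you also verify the requesting IP addresses.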

Second, run manual query tests. Identify the 10 to 15 queries most directly relevant to your brand’s subject matter expertise. Run each weekly in Perplexity. Record which sources are cited. When your domain appears, note the exact passage cited. That tells you which content is working and which structural pattern produced it.
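The weekly records are more useful once they are reduced to a single trend number. The sketch below computes, for each week, the share of test queries in which a given domain was cited. The record layout, week labels, and domains are assumptions for illustration.

```python
from collections import defaultdict


def citation_rate(observations, domain):
    """Share of queries per week in which domain appeared among cited sources.

    observations: iterable of (week, query, cited_domains) tuples.
    """
    by_week = defaultdict(lambda: [0, 0])  # week -> [cited count, total queries]
    for week, _query, cited in observations:
        by_week[week][1] += 1
        if domain in cited:
            by_week[week][0] += 1
    return {week: hit / total for week, (hit, total) in sorted(by_week.items())}


# Hypothetical manual test log.
observations = [
    ("2025-W10", "how does rag select sources", ["example.com", "other.org"]),
    ("2025-W10", "perplexitybot robots.txt", ["other.org"]),
    ("2025-W11", "how does rag select sources", ["example.com"]),
    ("2025-W11", "perplexitybot robots.txt", ["example.com"]),
]
rates = citation_rate(observations, "example.com")
```

A rising rate across weeks is the signal to look for; with only 10 to 15 queries, single-week swings mean little.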

Otterly.AI and Profound now offer automated tracking of brand mentions across generative AI platforms including Perplexity. Neither is a complete solution. Both give directional signal faster than manual querying at scale.

How to apply this in sequence

Audit robots.txt for PerplexityBot access first. Five minutes, eliminates the most common blocking issue immediately.

Identify your three to five highest-expertise content pages. Restructure them to question-format H2s with short, claim-first paragraphs beneath each heading. Add FAQPage schema to each.

Publish one piece of original research, however modest in scope. A simple aggregation of publicly available Malaysian market data, presented with original framing and a clear methodology, counts.

Establish a canonical entity name across all external knowledge bases. Wikidata entry if you do not have one. Consistent brand name form everywhere else.

Set up server log monitoring for PerplexityBot crawl frequency and begin weekly manual query testing for your core topic set.

The core finding: Perplexity selects sources through passage-level semantic retrieval, not page-level ranking. Citation probability is determined by content structure, entity consistency, and factual specificity. The traditional authority signals dominating Google optimisation are, at best, indirect here.
